doi: 10.56294/dm2024.569

ORIGINAL

Development of an Intelligent Learning Evaluation System Based on Big Data

Desarrollo de un Sistema Inteligente de Evaluación del Aprendizaje Basado en Big Data

Deviana Ridhani1 *, Krismadinata2 *, Dony Novaliendry3 *, Ambiyar4 *, Hansi Effendi2 *

1Universitas Negeri Padang, Technical and Vocational Education and Training, Padang, Indonesia.

2Universitas Negeri Padang, Department of Electrical Engineering, Padang, Indonesia.

3Universitas Negeri Padang, Department of Electronics Engineering, Padang, Indonesia.

4Universitas Negeri Padang, Department of Mechanical Engineering, Padang, Indonesia.

Cite as: Ridhani D, Krismadinata, Novaliendry D, Ambiyar, Effendi H. Development of an Intelligent Learning Evaluation System Based on Big Data. Data and Metadata. 2024; 3:.569. https://doi.org/10.56294/dm2024.569

Submitted: 07-05-2024 Revised: 04-09-2024 Accepted: 23-12-2024 Published: 24-12-2024

Editor: Adrián Alejandro Vitón-Castillo

Corresponding author: Krismadinata *

ABSTRACT

The increasing need for effective learning evaluation in higher education is driving the development of big data-based systems that provide comprehensive insights. This research aims to develop an Intelligent Learning Evaluation System (ILES) to support team teaching and monitor the effectiveness of the learning process through pre-tests, post-tests and periodic evaluations. The system was developed using the Agile methodology, including iterative stages of requirements gathering, design, development, testing and implementation. CodeIgniter is used for backend development and PostgreSQL as the database. The system enables dynamic monitoring and evaluation of teaching performance and student learning outcomes. The findings showed that real-time data visualization and user-friendly dashboards improve decision making for faculty and administrators. Testing shows increased engagement and actionable insights for performance improvement. ILES demonstrates the potential of big data in higher education by enabling data-based decision making and driving continuous improvement in teaching and learning. Future research will explore integration with broader institutional systems and the system's scalability.

Keywords: Smart Learning Evaluation; Student Performance Analysis; Advanced Data Analytics; Real-time Feedback; Big Data.

RESUMEN

La creciente necesidad de una evaluación eficaz del aprendizaje en la educación superior está impulsando el desarrollo de sistemas basados en big data para proporcionar conocimientos integrales. Esta investigación tiene como objetivo desarrollar un Sistema Inteligente de Evaluación del Aprendizaje (ILES) para apoyar la enseñanza en equipo y monitorear la efectividad del proceso de aprendizaje a través de pruebas previas, posteriores y evaluaciones periódicas. El sistema fue desarrollado utilizando la metodología Agile, incluyendo etapas iterativas de recopilación de requisitos, diseño, desarrollo, prueba e implementación. Codeigniter se utiliza para el desarrollo backend, PostgreSQL como base de datos. Este sistema permite el seguimiento y la evaluación dinámica del desempeño docente y de los resultados del aprendizaje de los estudiantes. El hallazgo demostró que la visualización de datos en tiempo real y los paneles fáciles de usar mejoran la toma de decisiones de profesores y administradores. Las pruebas muestran un mayor compromiso y conocimientos prácticos para mejorar el rendimiento. ILES demuestra el potencial del big data en la educación superior al permitir la toma de decisiones basada en datos e impulsar la mejora continua en la enseñanza y el aprendizaje. Las investigaciones futuras explorarán la integración con sistemas institucionales más amplios y su escalabilidad.

Palabras clave: Evaluación del Aprendizaje Inteligente; Análisis del Desempeño Estudiantil; Análisis de Datos Avanzado; Comentarios en Tiempo Real; Grandes Datos.

INTRODUCTION

In the rapidly evolving digital era, big data has become one of the most influential technological innovations in various sectors, including education.(1,2) This technology enables the efficient collection, storage and analysis of large amounts of data, opening up new opportunities to improve the quality and effectiveness of the teaching and learning process.(3) In the context of education, data generated from various student activities, such as attendance records, exam results, and feedback, can be used to gain deep insights into individual learning and performance patterns.(4,33)

The utilization of big data in education creates a new, more targeted and data-driven approach to evaluating learning outcomes and designing more effective interventions.(5,6) The integration of big data technologies in the education sector has revolutionized the way we approach the assessment of learning outcomes.(7,34) Traditional evaluation methods have proved inadequate in capturing the diverse nature of student learning and performance, creating an urgent need for more sophisticated and comprehensive assessment tools. To address this gap, researchers are turning to the development of intelligent learning evaluation systems that harness the power of big data analytics.(8,35,36,37,38)

Monitoring and evaluation (Monev) is an important part of the learning process in higher education.(9) As part of the duties of a campus Quality Assurance Unit, learning Monev covers all learning activities from the beginning to the end of the semester, including the evaluation of lecturer performance according to each lecturer's field of expertise. Learning evaluation is key to ensuring the effectiveness of the education system in providing quality learning experiences to students.(10) Quality assurance in higher education emerged when higher education institutions faced difficulties in monitoring the quality level of their services.(11)

Performance evaluation is expected to allow a lecturer's professionalism to be improved and developed continuously in accordance with the provisions, namely functional position and suitability of field of expertise.(12,39) Because student satisfaction is the basis of management decisions, universities must continually evaluate the performance of all lecturers who teach existing courses so that their teaching succeeds.(13) However, the monitoring and evaluation system implemented at Universitas Negeri Padang (UNP), available at https://evaluasi.unp.ac.id and shown in figure 1, has several problems.

Figure 1. Universitas Negeri Padang Evaluation Information System Problem Overview

Source: (https://evaluasi.unp.ac.id)

The first problem is that the evaluation system can only be accessed at the end of each semester, resulting in delays in data collection and analysis of evaluation results.(14) This hampers the faculty’s ability to respond to necessary changes in real time and reduces flexibility in adjusting learning strategies during the semester. Furthermore, the current evaluation system has not been integrated with the online attendance system in the Faculty of Engineering. This causes data gaps between student attendance and learning evaluation results. Integration with the online attendance system would enable a more comprehensive analysis of the link between student attendance and lecturer performance as educators.(15)

Another problem is that single sign-on (SSO) has not been implemented for login, which is a bottleneck for users, whether lecturers, students or administrative staff.(16) Users have to manage separate login credentials for the evaluation system and other platforms in the Faculty of Engineering, Universitas Negeri Padang (UNP), which adds complexity and increases security risks. In addition, the current system lacks an effective mechanism for analyzing the effectiveness and efficiency of the evaluation process. Without structured analysis, the Faculty of Engineering cannot fully understand the outcomes and weaknesses of the existing evaluation system or the opportunities for improvement.(19) With the increasing amount of data generated from evaluation results, the application of big data technology is required; without it, the evaluation system will fail to utilize the potential of the available data to provide deeper insights into learning performance. Big data analysis can identify trends, patterns, and issues that are not visible through traditional evaluation approaches.(17)

A learning evaluation system is a platform that allows students or related parties to provide assessment and feedback on the quality of the learning process in order to achieve educational goals.(18,19) The quality of this system is measured by its ease of use, reliability, and responsiveness to user needs, while the level of usage includes the extent to which students actually utilize it.(20,40)

To address the problems at the Faculty of Engineering, Universitas Negeri Padang that affect the effectiveness of the existing learning monitoring and evaluation system, an intelligent big data-based learning monitoring and evaluation system was designed and built. Through this intelligent system, monitoring can be accessed in real time.(21,39) This is expected to improve the quality of learning in subsequent periods. The proposed research scheme is Master Thesis Research. The research falls within the field of Digital Economy and is applied at the Faculty of Engineering, Universitas Negeri Padang (UNP).

Big data technology works by collecting and analyzing large amounts of data in real time. There is growing recognition of the application of big data in intelligent evaluation systems, given the potential benefits it offers in improving learning outcomes and education management. Big data technologies have the potential to improve various aspects of education, including learning analytics, data mining, predictive analytics, behavioral analytics, and academic analytics. Consequently, they can pave the way for innovative online education systems, real-time data collection, and optimization of education management, which will ultimately improve learning efficiency and student quality. In this context, effective and efficient data collection and analysis are increasingly important, making it more urgent to design an intelligent learning evaluation system based on big data.

The novelty of this research lies in its comprehensive approach to designing the intelligent system, which combines elements from several disciplines, namely education, big data analysis, and software engineering. The approach also addresses a non-academic aspect that is important in learning, namely student participation. The advantage of this intelligent system is its use of big data technology to collect, store, and analyze large and diverse learning data so as to provide new insights into the learning process. Building the system on the CodeIgniter framework provides advantages in terms of scalability, security and flexibility. This framework has proven to be stable and popular in web development, making the system easier to develop and maintain. The PostgreSQL database provides strong data management support, high performance, and the ability to handle large data volumes efficiently. By combining these advantages, the big data-based intelligent learning evaluation system becomes a holistic, effective, and efficient solution for solving learning evaluation problems and improving the quality of learning at the Faculty of Engineering, Universitas Negeri Padang compared to previous research or existing systems.

METHOD

This research designs and builds a smart system for monitoring and evaluating big data-based learning using the R&D (Research and Development) method with an Agile model.(22,23) The research was conducted at the Faculty of Engineering, Universitas Negeri Padang. The Agile model consists of several stages, namely requirement, design, develop, test, deploy, review, and launch.(24) These stages are shown in figure 2.

Figure 2. Agile Research Model

Table 1. Stages of Intelligent System Monitoring and Evaluation

| Stage | Activity | Achievement Target |
|---|---|---|
| Requirement | • Identify needs and problems • Analyze the evaluation information system • Conduct interviews with users, run surveys, and analyze related documentation. Analyze the system with users to find out in detail the software requirements of the users, namely lecturers and students. | • Obtain data and information about the current attendance system • Obtain data requirements for system design |
| Design | • The design stage covers architecture design, business processes and database design. Design using UML (Unified Modeling Language) modeling: use case diagram, class diagram and ERD (Entity Relationship Diagram). | • Database design • UML application design • UI/UX design |
| Develop | • Implementation of the application design, namely coding and database. • Design for database management with PostgreSQL • System development with the CodeIgniter framework for front end and back end, with Bootstrap for the user interface. | • Mobile attendance system as back end • Mobile attendance application as front end |
| Test | • System testing is done using black box testing. | • Application validation data |
| Deploy | • Upload the application to web hosting so that it can be accessed by users via the internet. • The presence information system is moved from localhost to the hosting side via SSH and Git version control. | • Information systems can be accessed via search engines • Applications can be downloaded from the Play Store |
| Review | • Evaluate the results and provide feedback to the developer. • Revision and evaluation produce a description of user feedback on the application already in use. • If there is feedback, the developer makes revisions by performing maintenance on the application. • After improvements are made, the results are evaluated again. | • A system that continues to run optimally and continues to evolve as needed. |
| Launch | • Launch the system into production or end-user environments. • User training, infrastructure setup and initial performance monitoring. | • Information systems can be accessed through search engines • Applications can be downloaded from the Play Store • Smooth and integrated system implementation |

Requirement

This stage identifies needs and problems through analysis of the existing evaluation information system, user interviews, surveys, documentation analysis, and software requirements analysis. At this stage, the researchers:

• Analyze the limitations of current evaluation monitoring

• Analyze the stakeholders who will use the current evaluation system

• Analyze the requirements of the tool in functional (what the system should do) and non-functional (performance, security, usability) categories.

Identifying Needs and Issues

• In this phase, the project team works with users (lecturers and students) to identify the problems and needs that the system should solve.

• Data collection can be done through group discussions, interviews, or surveys to understand users’ needs related to learning evaluation.

Analyze the Evaluation Information System

• The project team needs to analyze the current evaluation information system.

• This analysis includes reviewing the current workflow, data used, and evaluation methods.

• The goal is to identify weaknesses and opportunities for improvement in the existing evaluation system.

User Interviews, Surveys, and Documentation Analysis

• Conduct interviews with key users such as lecturers and students to understand their needs and expectations from the learning evaluation system.

• Surveys can be helpful in gathering broader information about users’ experiences and preferences.

• Analyze related documentation, such as curriculum, syllabus, and learning data, to gain deeper insight into evaluation needs.

Software Requirements Analysis by Users

• After collecting data from interviews, surveys, and analysis, the project team should analyze the software needs desired by the users.

• This includes understanding the types of reports and analysis required, integration with other learning systems, and the desired level of ease of use.

• The results of this analysis will be the basis for the development of the intelligent learning evaluation system.

Design

The design stage covers architecture design, business processes, and database design. The design uses UML (Unified Modeling Language) modeling, namely use case and class diagrams, together with an ERD (Entity Relationship Diagram).

Figure 3. Data-driven Learning Monitoring and Evaluation Smart System Architecture

The architecture of the Smart Monitoring and Learning Evaluation System integrates multiple layers to ensure efficient data processing, analysis, and visualization. Data is collected from internal sources, such as mobile and web applications, and transmitted in real-time through APIs to cloud storage for safekeeping. In the data preprocessing layer, tools like Apache Spark are employed to clean and prepare the raw data. Processed data is then queried and organized using frameworks such as Spark SQL and Hive in the data query layer. Advanced analytics, including machine learning and data mining, extract valuable insights from the data, which are visualized through interactive BI reporting tools like Chart.js. The application layer provides user-friendly access via mobile and web interfaces, enabling seamless monitoring and evaluation. This architecture supports effective decision-making and continuous improvement.
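The layered flow described above can be illustrated with a minimal sketch. It uses plain Python in place of Spark, Spark SQL and Hive, so it only mirrors the order of the layers (preprocessing, query, analytics), not the tools named in the architecture. The record fields, course names and function names are hypothetical and were chosen for illustration only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw evaluation records, as they might arrive from the API layer.
RAW_RECORDS = [
    {"course": "Circuits", "lecturer": "A", "score": "4"},
    {"course": "Circuits", "lecturer": "A", "score": "5"},
    {"course": "Circuits", "lecturer": "A", "score": ""},    # missing score
    {"course": "Databases", "lecturer": "B", "score": "3"},
    {"course": "Databases", "lecturer": "B", "score": "9"},  # outside the 1-5 scale
]


def preprocess(records):
    """Preprocessing layer: drop records whose score is missing or outside 1-5."""
    cleaned = []
    for rec in records:
        raw = rec.get("score", "").strip()
        if not raw.isdigit():
            continue
        score = int(raw)
        if 1 <= score <= 5:
            cleaned.append({**rec, "score": score})
    return cleaned


def query_by_course(records):
    """Query layer: group the cleaned scores by course."""
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec["course"]].append(rec["score"])
    return dict(grouped)


def analyze(grouped):
    """Analytics layer: mean score per course, ready to hand to a chart."""
    return {course: round(mean(scores), 2) for course, scores in grouped.items()}


summary = analyze(query_by_course(preprocess(RAW_RECORDS)))
print(summary)
```

In a deployment that follows the described architecture, each function would presumably be replaced by the corresponding distributed component, while the visualization layer would consume a structure similar to `summary`.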

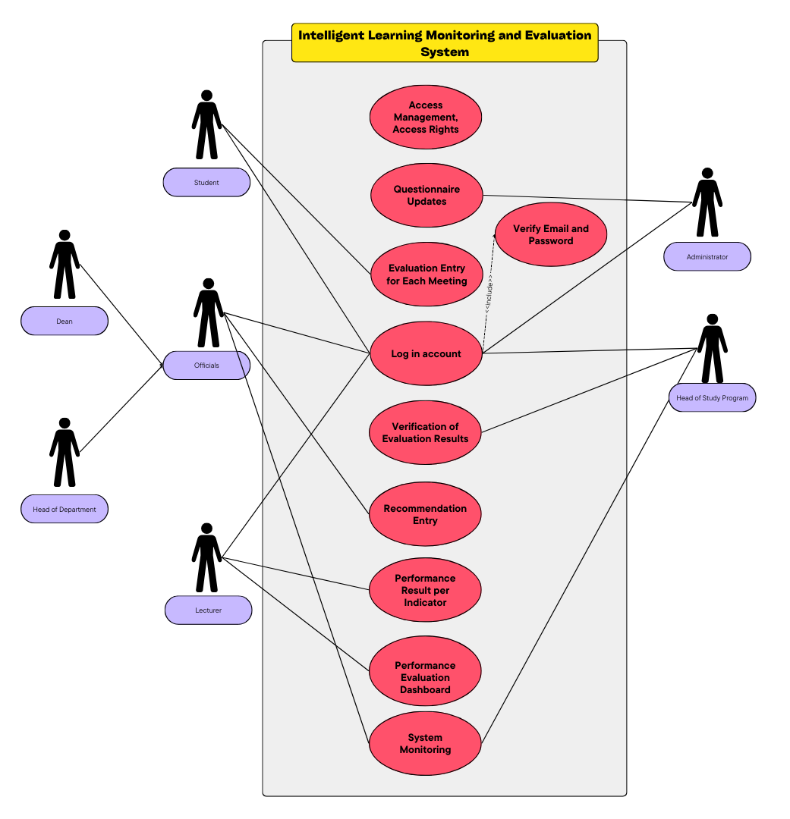

The Use Case Diagram in figure 4 describes the interaction between users (actors) and the Intelligent Learning Monitoring and Evaluation System, built with the CodeIgniter framework and a PostgreSQL database at the Faculty of Engineering UNP, in terms of the functionality the system provides.

Figure 4. Use Case Diagram of Big Data-based Learning Evaluation Monitoring Smart System

The diagram depicts an Intelligent Learning Monitoring and Evaluation System which involves several entities, namely students, lecturers, admin, and PIC IT (Person in Charge IT). Students use the system to evaluate learning outcomes, while lecturers can access student evaluation and performance data. Admin is responsible for account management, access updates, and system monitoring, while PIC IT handles user email and password verification. The main functions of the system include account log in, evaluation of learning outcomes, recapitulation via the performance evaluation dashboard, and monitoring of the system as a whole. Each element is connected in an integrated manner to ensure efficiency in the learning monitoring and evaluation process.
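The actor responsibilities in the use case diagram can be read as a role-to-permission mapping. The sketch below shows one possible way to encode it; the action names are hypothetical labels derived from the description above and are not identifiers from the actual system.

```python
from enum import Enum


class Role(Enum):
    STUDENT = "student"
    LECTURER = "lecturer"
    ADMIN = "admin"
    PIC_IT = "pic_it"


# Hypothetical action names based on the use cases described in the text.
PERMISSIONS = {
    Role.STUDENT: {"login", "submit_evaluation"},
    Role.LECTURER: {"login", "view_evaluation_results", "view_student_performance"},
    Role.ADMIN: {"login", "manage_accounts", "update_access", "monitor_system"},
    Role.PIC_IT: {"login", "verify_credentials"},
}


def is_allowed(role: Role, action: str) -> bool:
    """Return True when the given role may perform the given action."""
    return action in PERMISSIONS.get(role, set())


print(is_allowed(Role.STUDENT, "submit_evaluation"))
print(is_allowed(Role.STUDENT, "manage_accounts"))
```

Keeping the mapping in one table makes it straightforward to check every request against the actor's role before a controller runs.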

Develop

To ensure a successful system deployment, the process begins with thorough development and testing phases. Once completed, server infrastructure and hardware required for implementation are prepared. The initial iteration of the system is deployed in stages to the production environment, with close monitoring of performance and technical support provided as needed. After deployment, feedback is collected from users, including lecturers, students, and admins, to assess the system’s effectiveness. Bug monitoring and tracking tools can help identify issues or areas for improvement. This feedback is then evaluated to address identified issues, improvement needs, or additional features, ensuring the system’s performance, security, and user experience are optimized. The development process continues with further iterations, involving testing and deploying updated versions based on user feedback and adjustments until the system achieves stability and desired performance. Post-deployment, regular monitoring and maintenance are crucial to ensure system safety and optimal performance, while updates are made in response to technological advancements, user needs, or legislative changes. Following the Agile model, the system undergoes continuous renewal, with regular improvements and new features added to maintain its relevance and effectiveness.

Test

The testing stage is a crucial phase in the system development lifecycle to ensure the system functions as intended and meets all requirements. It begins with unit testing, which verifies the functionality of individual components or modules. This is followed by integration testing to ensure proper interaction between combined modules. System testing is then conducted to validate the system as a whole, ensuring it meets specified requirements. User Acceptance Testing (UAT) involves end-users to confirm the system performs as expected in real-world scenarios. Additionally, performance testing evaluates the system’s speed, reliability, and scalability under various workloads, while security testing ensures protection against vulnerabilities and threats. Completing these tests thoroughly helps identify and resolve issues, ensuring a reliable and efficient system before deployment.

Deploy

To make the application accessible to users via the internet, it must be uploaded to a web hosting platform. This process involves transferring the application from the localhost environment to the hosting server, ensuring all configurations are properly set. The transfer is typically performed using secure protocols such as SSH, which provides a secure connection for managing files on the hosting server. Additionally, Git Version Control can be utilized to streamline the deployment process, enabling efficient version tracking and synchronization between the local environment and the hosting side. This ensures a seamless transition and easy management of updates.

Review

The evaluation stage focuses on reviewing the application’s performance and gathering feedback from users to provide valuable insights to the developer. This stage involves analyzing user feedback to identify issues, improvement areas, or additional features required. If feedback indicates necessary changes, the developer initiates revisions and conducts maintenance to address the identified concerns. Once the improvements are implemented, the application undergoes another evaluation to ensure the changes meet user expectations and enhance overall functionality. This iterative process helps refine the application and maintain its effectiveness over time.

Launch

The final stage involves launching the system into production or end-user environments, making it fully operational and accessible to its intended users. This phase includes setting up the necessary infrastructure to ensure the system operates seamlessly in its new environment. Additionally, user training is conducted to familiarize end-users with the system’s features and functionality, enabling them to use it effectively. Initial performance monitoring is also carried out to track the system’s behavior, identify any early issues, and ensure optimal performance from the outset.

RESULTS AND DISCUSSION

The results of developing an intelligent system for monitoring and evaluating learning in higher education can be seen as follows:

Login page

The Login Page serves as a user verification page before granting access rights to the evaluation monitoring intelligent system based on the access rights of each user. Users only need to login once in one service, and then they can access many other applications or systems connected to SSO without the need to re-login. Users enter a username and password to be able to access the intelligent monitoring and evaluation system, according to their respective access rights. If the username and password entered are correct, the user will be directed to the dashboard page. If the username and password entered are incorrect, the user will receive an error message and remain on the login page to try again.
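The credential check and redirect behavior described above can be sketched as follows. This is an illustrative example only: it does not implement SSO, the user store is an in-memory dictionary, and the account name, password, page names and iteration count are assumptions rather than details of the deployed system.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # assumed work factor for the key derivation


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a password hash with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


def make_user(username: str, password: str, role: str) -> dict:
    """Create a hypothetical user record with a random per-user salt."""
    salt = os.urandom(16)
    return {"username": username, "salt": salt,
            "hash": hash_password(password, salt), "role": role}


# Hypothetical in-memory user store standing in for the database table.
USERS = {u["username"]: u for u in [make_user("lecturer01", "s3cret!", "lecturer")]}


def login(username: str, password: str) -> dict:
    """Return the page the user lands on, plus an error message on failure."""
    user = USERS.get(username)
    if user is not None and hmac.compare_digest(
        user["hash"], hash_password(password, user["salt"])
    ):
        return {"page": "dashboard", "role": user["role"], "error": None}
    return {"page": "login", "role": None, "error": "Invalid username or password."}


print(login("lecturer01", "s3cret!")["page"])
print(login("lecturer01", "wrong")["page"])
```

The same error message is returned for an unknown username and for a wrong password, so the response does not reveal which accounts exist.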

Dashboard page

The learning monitoring and evaluation dashboard page provides an overview of learning progress, student performance, course evaluations, and other relevant information for monitoring and evaluating the effectiveness of teaching and learning activities. It is shown in figure 5.

Figure 5. Dashboard Form

The dashboard is simple and informative, allowing users to see key information and evaluation statistics in a concise manner. The colors used are clear, with icons and text aiding quick identification of each data category. This design lends itself to quick access for users with superuser access levels. This section of the dashboard displays a summary of the main data: 270 lecturers, 40 students, 10 programs, and 2 courses. Lecturer Performance Evaluations displays the total number of lecturer performance evaluations (12) with a percentage increase of +2.02 %. Learning Evaluation displays the total number of learning evaluations (35) with a percentage decrease of -0.24 %.

The Satisfaction Survey by Month section displays the results of user or stakeholder satisfaction surveys on a monthly basis. This data usually includes satisfaction scores, trends in satisfaction compared to the previous month, and other indicators such as satisfaction with service, performance, or quality of user experience. In the dashboard view, satisfaction surveys can be presented as graphs or information cards that show the number of respondents, the percentage of satisfaction, and the change in that percentage from month to month. In addition, the data can be segmented by user category (e.g. lecturers, students, staff) for a more in-depth analysis of the satisfaction level of each group.

The study program table displays data for each study program that includes several important columns such as the name of the study program, the evaluation progress level, and the category of the progress. Evaluation Progress displays the level of evaluation completion in the form of a percentage (%), which reflects how far the study program has completed the evaluation process. The progress categories for the evaluation process based on the percentage achievement level are not yet started, beginning, low progress, medium progress, almost finished, finished. These categories help identify the level of evaluation completion and make it easier to monitor each study program based on its achievements.
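The mapping from a completion percentage to a progress category can be sketched as a simple threshold function. The category names come from the text above, but the paper does not state the cut-off values, so the thresholds below (0, 25, 50, 75, 100) are assumptions chosen only to make the example concrete.

```python
def progress_category(percent: float) -> str:
    """Map an evaluation completion percentage to a progress category.

    The cut-off values are assumed for illustration; the source does not
    specify them.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent == 0:
        return "not yet started"
    if percent < 25:
        return "beginning"
    if percent < 50:
        return "low progress"
    if percent < 75:
        return "medium progress"
    if percent < 100:
        return "almost finished"
    return "finished"


for value in (0, 10, 40, 60, 90, 100):
    print(value, progress_category(value))
```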

The Best Lecturers section displays data about lecturers, including their names and the classes they teach, as well as their progress on evaluations. This helps to highlight lecturers based on their evaluation progress, making it easier to identify lecturers with high performance and consistent progress. This data assists in recognizing top-performing lecturers and identifying areas where additional support or focus may be needed to achieve evaluation goals.

The New Student table records information about new students, including their names, the courses they take, and the status of their midterm and final evaluations. Each row in the table lists the student’s name, followed by the courses they are taking. The mid-semester completion status indicates whether the student has completed the midterm evaluation, while the final completion status indicates the same for the final evaluation. With this table, academic managers can easily monitor the evaluation progress of each new student and identify both students who have fulfilled their evaluation obligations and those who may need more attention to complete outstanding evaluations.

The Evaluation List table is designed to record important information related to evaluations conducted by students. The table includes columns listing the student’s name, course taken, evaluation category, class, lecturer name, and evaluation status. Each row in the table shows the student’s name, followed by the course taken and the type of evaluation, whether it is for midterm or final. The class column reflects the class in which the student is enrolled, while the lecturer name column mentions the lecturer teaching the course. The evaluation status provides information about the progress of completing the evaluation, whether it is still in process (In Progress) or has been completed (Progress). With this table, academic managers can easily monitor the progress of student evaluations, provide a clear picture of the ongoing evaluation status, and take the necessary steps to support students in fulfilling their evaluation obligations.

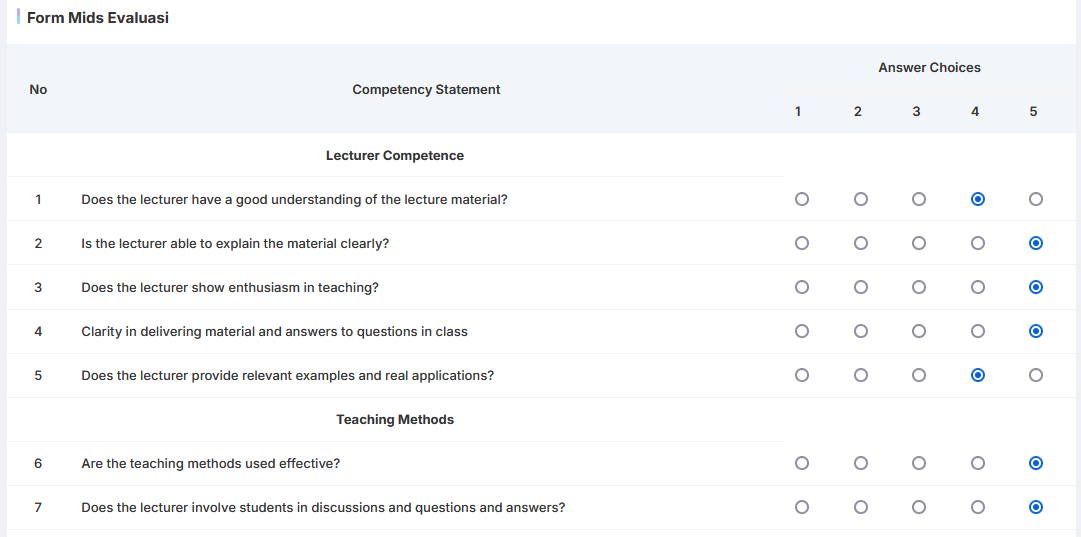

Mid Evaluation page

The mid evaluation page will be displayed after the student user enters the main menu and selects the mid evaluation page menu. This page will appear according to the schedule determined by the academic staff. Mid evaluation page can be seen in figure 6 below.

Figure 6. Mid Evaluation Page

The Mid-Evaluation Form is designed to collect feedback from students regarding the course they are enrolled in at mid-semester. In the assessment of lecturer performance, students are asked to give a score from 1 to 5 for several aspects, such as material delivery, interaction skills, and lecturer’s ability to help students. Each aspect of the assessment provides an overview of the teaching effectiveness received by students.

This form also provides a space for open feedback, where students can share the positives they gained from the course as well as areas for improvement. In addition, students can provide suggestions for future teaching, which lecturers can use to improve their teaching methods.

At the end of the form, there is an option to mark the completion status, whether the student has completed it or not. Thus, this form not only serves as an evaluation tool, but also as a means of communication between students and lecturers, aiming to improve the quality of learning and the overall academic experience.
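The structure of the mid-evaluation form can be sketched as a small data class with validation of the 1 to 5 scores. The field names and the three aspect keys are hypothetical, based on the aspects named above; the actual form may contain more items.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical aspect keys based on the aspects named in the text.
ASPECTS = ("material_delivery", "interaction_skills", "helpfulness")


@dataclass
class MidEvaluation:
    student: str
    course: str
    scores: dict            # aspect name -> integer score from 1 to 5
    positives: str = ""     # open feedback: what went well
    improvements: str = ""  # open feedback: areas for improvement
    suggestions: str = ""   # suggestions for future teaching
    completed: bool = False

    def validate(self) -> list:
        """Return a list of error messages; an empty list means the form is valid."""
        errors = []
        for aspect in ASPECTS:
            value = self.scores.get(aspect)
            if not isinstance(value, int) or not 1 <= value <= 5:
                errors.append(f"{aspect}: score must be an integer from 1 to 5")
        return errors

    def average_score(self) -> float:
        """Average of all aspect scores for a valid form."""
        if self.validate():
            raise ValueError("cannot average an invalid form")
        return mean(self.scores[a] for a in ASPECTS)


form = MidEvaluation(
    student="Student A",
    course="Course X",
    scores={"material_delivery": 4, "interaction_skills": 5, "helpfulness": 3},
    completed=True,
)
print(form.validate(), form.average_score())
```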

Test results

The testing phase involved black box testing that focused on the functionality of the evaluation monitoring intelligent system being developed. During the testing stage, all menus have been functioning properly, and each menu effectively demonstrates the performance of this application.

Lecturer Performance

The Lecturer Performance table is designed to display lecturers’ performance in teaching courses, focusing on mid-semester evaluation scores from two consecutive years, 2024 and 2025. Each row in the table lists the name of the course taught and the name of the lecturer who taught it. In addition, the table includes the midterm evaluation scores for 2024 and 2025, which provide an overview of the lecturers’ teaching performance over the period. The improvement column shows the difference between the mid 2025 score and the mid 2024 score; a positive number indicates an improvement in performance, while a negative number indicates a decline. This table allows academics to analyze lecturer performance over time and to identify areas where lecturers have made progress or require further attention, so that appropriate improvement efforts can be made.
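The improvement column can be sketched as a subtraction of the two midterm scores. The rows below are invented sample data used only to show the sign convention; they are not results from the study.

```python
def improvement(mid_2024: float, mid_2025: float) -> float:
    """Difference between the 2025 and 2024 midterm scores, rounded to two decimals."""
    return round(mid_2025 - mid_2024, 2)


# Hypothetical sample rows, not data from the study.
rows = [
    {"course": "Course X", "lecturer": "Lecturer A", "mid_2024": 3.5, "mid_2025": 4.0},
    {"course": "Course Y", "lecturer": "Lecturer B", "mid_2024": 4.0, "mid_2025": 3.5},
]

for row in rows:
    row["improvement"] = improvement(row["mid_2024"], row["mid_2025"])
    trend = "improved" if row["improvement"] > 0 else "declined or unchanged"
    print(row["lecturer"], row["improvement"], trend)
```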

Feedback and Survey Analysis

The Feedback and Survey Analysis table is designed to present the results of analyzing feedback and surveys from students regarding the courses they took. The table includes the student name, course name, satisfaction average, feedback average, and total surveys received. Each row in the table shows the name of the student and the course evaluated. The satisfaction average reflects the average score of the student’s level of satisfaction with the course, usually on a scale of 1 to 5, while the feedback average is the average score of the feedback given by the student on a particular aspect. In addition, total surveys received shows the number of surveys collected for each course. With this table, academics can get an overall picture of student satisfaction and feedback, which is useful for evaluating the quality of teaching and learning experiences. This analysis can also be used to formulate corrective measures based on student feedback, so as to improve the quality of teaching in the future.
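The aggregation behind such a table can be sketched as a per-course summary of satisfaction average, feedback average and survey count. The survey records and field names below are hypothetical sample data, not values from the study.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey responses on a 1-5 scale.
surveys = [
    {"student": "S1", "course": "Course X", "satisfaction": 4, "feedback": 5},
    {"student": "S2", "course": "Course X", "satisfaction": 5, "feedback": 4},
    {"student": "S3", "course": "Course Y", "satisfaction": 3, "feedback": 3},
]


def summarize(responses):
    """Group responses by course and compute the averages and the survey count."""
    by_course = defaultdict(list)
    for response in responses:
        by_course[response["course"]].append(response)
    return {
        course: {
            "satisfaction_avg": mean(r["satisfaction"] for r in items),
            "feedback_avg": mean(r["feedback"] for r in items),
            "total_surveys": len(items),
        }
        for course, items in by_course.items()
    }


report = summarize(surveys)
print(report)
```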

Table 2 presents the results of the black box tests performed on the administrator side of the system. It covers test parameters designed to evaluate the functionality and reliability of the system from an end-user perspective, without considering the internal implementation.

Table 2. Black Box Testing Results for Administrators

| No | Administrator Features | Test Description | Test Input | Expected Output | Testing Results | Status |
|---|---|---|---|---|---|---|
| 1 | User Management | Ensure administrators can add, delete, or manage user accounts (lecturers, students). | Add a new user; delete an old user | The new user is added, the old user is removed, and the changes are saved | User management works as expected | Successful |
| 2 | Access Rights Settings | Ensure administrators can set user access rights according to role (admin, lecturer, student). | Set access rights for various users | Access rights are set according to role: admin (full access), lecturer (access to student data), student (evaluation only) | Access rights set as specified | Successful |
| 3 | System Integration | Test whether administrators can integrate the evaluation system with other academic systems (grades, attendance). | Connect to the academic system | The system integrates data from the academic system automatically and updates it in real time | Integration successful without problems | Successful |
| 4 | System Performance Monitoring | Ensure administrators can monitor system performance, including resource usage (CPU, memory). | Access the system monitoring page | The monitoring page shows resource usage, the number of active users, and other performance statistics | System performance information displayed correctly | Successful |
| 5 | Data Backup and Restore | Test the backup and restore feature that protects the evaluation data stored in the system. | Create a backup and restore previous data | The backup is stored successfully and the data is restored with nothing missing | Backup and restore work well | Successful |
| 6 | Collective Evaluation Report | Ensure administrators can generate collective evaluation reports for a whole faculty or major. | Request an evaluation report for the whole faculty | A collective evaluation report for the whole faculty or degree program is produced in PDF or Excel format as requested | Report generated as specified | Successful |
| 7 | Evaluation Algorithm Settings | Ensure administrators can configure or modify the evaluation algorithm according to institutional needs. | Set evaluation algorithm parameters | The algorithm parameters are changed and saved, and the algorithm runs according to the settings | Algorithm runs according to the parameters | Successful |
| 8 | Evaluation Data Management | Ensure administrators can manage evaluation data, such as deleting or correcting erroneous data. | Delete or edit evaluation data | Evaluation data is deleted or corrected as requested | Data management runs smoothly | Successful |
| 9 | Notifications and Communication System | Test whether administrators can set automatic notifications for users about evaluation results or system updates. | Set up and send automatic notifications | Notifications about evaluation results or system updates are received automatically by the relevant users | Notifications sent correctly | Successful |
| 10 | Activity Log Monitoring | Ensure administrators can view user activity logs for audit or security purposes. | Access the user activity log | The complete activity log is shown, including data access and changes made by users | Activity log displayed successfully | Successful |

Table 2 displays the results of black box testing of the administrator features. The User Management feature allows administrators to add, delete, and manage user accounts. With the Access Rights Settings feature, administrators determine the access level of each role, which supports data security and privacy. System Integration connects the evaluation system with other academic systems, while System Performance Monitoring reports resource usage, active users, and other performance statistics. The Data Backup and Restore feature protects stored evaluation data, and the Collective Evaluation Report feature produces faculty-wide reports in PDF or Excel format. Administrators can also configure the evaluation algorithm settings and manage evaluation data, which supports data-driven decision making. The Notifications and Communication System delivers automatic notifications to users, and Activity Log Monitoring allows administrators to track user activity, supporting transparency and accountability. All ten features returned a successful status in black box testing.
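The role-based access rights tested in row 2 of Table 2 can be sketched as a simple permission lookup. This is a minimal Python illustration under stated assumptions: the permission names (`view_student_data`, `submit_evaluation`, `manage_users`) and the wildcard convention are hypothetical, and the actual system was built with CodeIgniter rather than Python.

```python
# Hypothetical mapping of roles to permitted actions, mirroring Table 2:
# admin (full access), lecturer (student data), student (evaluation only).
ROLE_PERMISSIONS = {
    "admin": {"*"},                      # "*" stands for full access
    "lecturer": {"view_student_data"},
    "student": {"submit_evaluation"},
}


def can(role: str, action: str) -> bool:
    """Return True if the given role is allowed to perform the action."""
    permissions = ROLE_PERMISSIONS.get(role, set())
    return "*" in permissions or action in permissions


# A black box style check: only inputs and expected outputs are examined.
print(can("admin", "manage_users"))          # True
print(can("lecturer", "view_student_data"))  # True
print(can("student", "view_student_data"))   # False
```

Unknown roles fall back to an empty permission set, so access is denied by default.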

The development of an intelligent learning evaluation system powered by big data represents a transformative step in modern education.(25) Such systems leverage advanced data analytics to assess student performance, predict learning outcomes, and personalize educational experiences. The integration of big data technologies enables institutions to shift from traditional, one-size-fits-all evaluation methods to more nuanced and adaptive approaches.(26) This discussion explores the potential benefits, challenges, and future implications of this innovative system.

Big data-driven evaluation systems offer unparalleled opportunities to enhance learning outcomes.(27) By analyzing vast datasets, these systems can identify trends in student behavior, highlight strengths, and detect areas needing improvement. For instance, real-time feedback based on performance metrics empowers both educators and learners to make timely adjustments to teaching methods and study strategies.(28) Moreover, such systems facilitate personalized learning by tailoring educational content to individual needs, fostering a more engaging and effective learning environment. The scalability of these technologies makes them especially valuable for large educational institutions.

Despite their potential, intelligent learning evaluation systems face several challenges. Privacy concerns remain a critical issue, as collecting and analyzing student data may expose sensitive information if not handled securely.(29) Additionally, integrating these systems into existing educational frameworks can be technically and financially demanding, particularly for underfunded schools or institutions with outdated infrastructure. Resistance from educators unfamiliar with advanced technology may also hinder widespread adoption. Addressing these barriers requires robust cybersecurity measures, adequate funding, and comprehensive training programs for stakeholders.

One key consideration is the impact of such systems on educational equity. While they have the potential to bridge gaps by providing underserved students with access to tailored learning resources, they could also exacerbate inequalities if certain schools lack the resources to implement them effectively. The risk of algorithmic bias in evaluating diverse student populations must be mitigated to ensure fair and accurate assessments. A commitment to inclusivity and transparency in the design of these systems is essential to prevent disparities.(30)

The future of intelligent learning evaluation systems lies in continuous improvement through advanced technologies like artificial intelligence (AI) and machine learning (ML).(31) These advancements could enable systems to provide deeper insights, such as predicting career trajectories or developing adaptive learning pathways in real time. Collaborative efforts between educators, technologists, and policymakers will be vital to shaping systems that are both effective and ethical. Regular audits and feedback loops should be incorporated to refine algorithms and align them with evolving educational standards.(32)

CONCLUSIONS

This research successfully designed and implemented a big data-based intelligent learning evaluation system using the CodeIgniter framework. The system allows lecturers and students to evaluate learning efficiently and effectively. Applying the Agile model in system development provided high flexibility and adaptability, and the iterative, participatory process enabled system customization based on user feedback. The system utilizes big data to collect and analyze learning evaluation data. The database structure, designed using an ERD and normalization, provides efficient data management and supports accurate data analysis. The system also makes it easier to monitor and analyze student learning outcomes, allowing lecturers to identify areas for improvement and adjust teaching methods accordingly. The CodeIgniter framework proved to be a suitable choice for developing this system thanks to its speed, reliability, and ease of use. Overall, the system contributes to the improvement of learning quality.

REFERENCES

1. Chen NS, Yin C, Isaias P, Psotka J. Educational big data: extracting meaning from data for smart education. Interactive Learning Environments. 2020 Feb 17;28(2):142–7.

2. Kravchenko H, Ryabova Z, Kossova-Silina H, Zamojskyj S, Holovko D. Integration of information technologies into innovative teaching methods: Improving the quality of professional education in the digital age. Data and Metadata. 2024 Jul 12;3:431.

3. Xin X, Shu-Jiang Y, Nan P, ChenXu D, Dan L. Review on A big data-based innovative knowledge teaching evaluation system in universities. Journal of Innovation & Knowledge. 2022 Jul;7(3):100197.

4. Jing Y, Zhao L, Zhu K, Wang H, Wang C, Xia Q. Research Landscape of Adaptive Learning in Education: A Bibliometric Study on Research Publications from 2000 to 2022. Sustainability. 2023 Feb 8;15(4):3115.

5. Sorour A, Atkins AS. Big data challenge for monitoring quality in higher education institutions using business intelligence dashboards. Journal of Electronic Science and Technology. 2024 Mar;22(1):100233.

6. Bhojan R, Rajagopal M, Ramesh R. Big Data De-duplication using modified SHA algorithm in cloud servers for optimal capacity utilization and reduced transmission bandwidth. Data and Metadata. 2024 Mar 30;3:245.

7. Baig MI, Shuib L, Yadegaridehkordi E. Big data in education: a state of the art, limitations, and future research directions. International Journal of Educational Technology in Higher Education. 2020 Dec 2;17(1):44.

8. Bensattalah A, Belhadji Y. Evaluating Sensor-Derived Data Quality for IoT-based Temperature Monitoring. In 2024. p. 103–16. Available from: https://www.atlantis-press.com/doi/10.2991/978-94-6463-496-9_9

9. Swacha J, Kulpa A. Evolution of Popularity and Multiaspectual Comparison of Widely Used Web Development Frameworks. Electronics (Basel). 2023 Aug 23;12(17):3563.

10. Nurrahman A, Cahyani MD, Nurfatmawati L, Wibowo H. Developing The Instrument of E-Learning Evaluation: Study at Vocational School. Journal of Office Administration : Education and Practice. 2023 Dec 7;3(3):163–74.

11. Javed Y, Alenezi M. A Case Study on Sustainable Quality Assurance in Higher Education. Sustainability. 2023 May 17;15(10):8136.

12. Broumi S, Sundareswaran R, Shanmugapriya M, Singh PK, Voskoglou M, Talea M. Faculty Performance Evaluation through Multi-Criteria Decision Analysis Using Interval-Valued Fermatean Neutrosophic Sets. Mathematics. 2023 Sep 5;11(18):3817.

13. Teng Y, Zhang J, Sun T. RETRACTED: Data‐driven decision‐making model based on artificial intelligence in higher education system of colleges and universities. Expert Syst. 2023 May 31;40(4).

14. Arinze CA, Ajala OA, Okoye CC, Ofodile OC, Daraojimba AI. Evaluating the integration of advanced IT solutions for emission reduction in the oil and gas sector. Engineering Science & Technology Journal. 2024 Mar 10;5(3):639–52.

15. Aziz AA, Yusof KM, Yatim JM. Evaluation on the Effectiveness of Learning Outcomes from Students’ Perspectives. Procedia Soc Behav Sci. 2012 Oct;56:22–30.

16. Huber E, Harris L, Wright S, White A, Raduescu C, Zeivots S, et al. Towards a framework for designing and evaluating online assessments in business education. Assess Eval High Educ. 2024;49(1):102–16.

17. Cui Y, Ma Z, Wang L, Yang A, Liu Q, Kong S, et al. A survey on big data-enabled innovative online education systems during the COVID-19 pandemic. Journal of Innovation & Knowledge. 2023 Jan;8(1):100295.

18. Liu M, Yu D. Towards intelligent E-learning systems. Educ Inf Technol (Dordr). 2023 Jul 12;28(7):7845–76.

19. Ñañez-Silva MV, Lucas-Valdez GR, Larico-Quispe BN, Peñafiel-García Y. Education for Sustainability: A Data-Driven Methodological Proposal for the Strengthening of Environmental Attitudes in University Students and Their Involvement in Policies and Decision-Making. Data and Metadata. 2024 Jan 1;3:448.

20. Singh H, Miah SJ. Smart education literature: A theoretical analysis. Educ Inf Technol (Dordr). 2020 Jul 29;25(4):3299–328.

21. Gonzalez-Argote J, Lepez CO, Castillo-Gonzalez W, Bonardi MC, Cano CAG, Vitón-Castillo AA. Use of real-time graphics in health education: A systematic review. EAI Endorsed Trans Pervasive Health Technol. 2023 Apr 4;9:e3.

22. Sanad Z, Musleh Al-Sartawi AMA. Research and development spending in the pharmaceutical industry: Does board gender diversity matter? Journal of Open Innovation: Technology, Market, and Complexity. 2023 Sep;9(3):100145.

23. Sahputra Batubara H, Jalinus N, Rizal F. Developing an integrated internship application for vocational schools: aligning user-centered design with technological innovation to enhance internship experiences. Data and Metadata. 2025 Jan 1;4:447.

24. Junker TL, Bakker AB, Derks D. Toward a theory of team resource mobilization: A systematic review and model of sustained agile team effectiveness. Human Resource Management Review. 2025 Mar;35(1):101043.

25. Bhatt P, Muduli A. Artificial intelligence in learning and development: a systematic literature review. European Journal of Training and Development. 2023 Aug 14;47(7/8):677–94.

26. Rane N. Integrating Leading-Edge Artificial Intelligence (AI), Internet of Things (IoT), and Big Data Technologies for Smart and Sustainable Architecture, Engineering and Construction (AEC) Industry: Challenges and Future Directions. SSRN Electronic Journal. 2023;

27. Zheng L, Wang C, Chen X, Song Y, Meng Z, Zhang R. Evolutionary machine learning builds smart education big data platform: Data-driven higher education. Appl Soft Comput. 2023 Mar;136:110114.

28. Islam MdR, Kabir MdM, Mridha MF, Alfarhood S, Safran M, Che D. Deep Learning-Based IoT System for Remote Monitoring and Early Detection of Health Issues in Real-Time. Sensors. 2023 May 30;23(11):5204.

29. Gligorea I, Cioca M, Oancea R, Gorski AT, Gorski H, Tudorache P. Adaptive Learning Using Artificial Intelligence in e-Learning: A Literature Review. Educ Sci (Basel). 2023 Dec 6;13(12):1216.

30. Mavropoulou E, Koutsoukos M, Terzopoulos D, Petridis C. Digitising Education: Augmenting the Learning Experience with Digital Tools and AI. SSRN Electronic Journal. 2024;

31. Soori M, Arezoo B, Dastres R. Artificial intelligence, machine learning and deep learning in advanced robotics, a review. Cognitive Robotics. 2023;3:54–70.

32. Abedi EA. Tensions between technology integration practices of teachers and ICT in education policy expectations: implications for change in teacher knowledge, beliefs and teaching practices. Journal of Computers in Education. 2024 Dec 12;11(4):1215–34.

33. Novaliendry D, Wibowo T, Ardi N, Evi T, Admojo D. Optimizing Patient Medical Records Grouping through Data Mining and K-Means Clustering Algorithm: A Case Study at RSUD Mohammad Natsir Solok. Int J Online Biomed Eng. 2023;19(12):144–55. doi: 10.3991/ijoe.v19i12.42147

34. Watimena FY, et al. Data Mining Application for the Spread of Endemic Butterfly Cenderawasih Bay using the K-Means Clustering Algorithm. Int J Online Biomed Eng. 2023;19(9):108–21. doi: 10.3991/ijoe.v19i09.40907

35. Ahyanuardi, Verawardina U, Novaliendry D, Deswati L, Bahtiar RA. An Analysis on the Needs Assessment of Online Learning Program in Faculty of Engineering, Universitas Negeri Padang. Pegem Egit ve Ogr Derg. 2022;13(1):13–9. doi: 10.47750/pegegog.13.01.02

36. Adri M, Novaliendry D, Islapirna I, Elfina E, Ardayanti S. Development of android-based interactive multimedia learning in discrete mathematics courses. AIP Conf Proc. 2023;2798(1). doi: 10.1063/5.0164277

37. Alolayyan MN, Al-Daoud KI, Al Oraini B, Ahmmad Hunitie MF, Vasudevan A, Luo P, et al. Mathematical Model to Evaluate the Effect of Information Quality, and Management Capability on Hospital Performance. Salud, Ciencia y Tecnología. 2024;4:.979.

38. MV N, Gill C. A study on the approaches of teaching English for deaf and hard-of-hearing (DHH) students in the special higher secondary schools (HSS) in south India. Salud, Ciencia y Tecnología. 2024; 4:.1299.

39. Novaliendry D, Adri M, Zakaria Z, Yanto R, Ponimin P. Effectiveness of using Gmonio as a learning media. AIP Conf Proc. 2023;2798(1). doi: 10.1063/5.0164278

38. Adi N, Wahdi Y, Dewi I, Lubis A, Devega A. The Effectiveness of Learning Media as a Supporter of Online Learning in Computer Networking Courses. JTIP. 2022 Apr;14(3):278–83.

40. Swara G, Giatman M, Ambiyar A, Simatupang W, Jalinus N, Abdullah R. Development and Feasibility Test on Android-Based Interactive Multimedia Applications for Mathematics Learning. JTIP. 2021 Sep;14(2):106–11.

FINANCING

The authors did not receive financing for the development of this research.

CONFLICT OF INTEREST

The authors declare that there is no conflict of interest.

AUTHORSHIP CONTRIBUTION

Conceptualization: Deviana Ridhani, Krismadinata, Dony Novaliendry, Ambiyar, Hansi Effendi.

Data curation: Krismadinata.

Research: Deviana Ridhani, Krismadinata.

Methodology: Deviana Ridhani, Krismadinata, Dony Novaliendry.

Software: Deviana Ridhani.

Validation: Krismadinata, Ambiyar.

Drafting - original draft: Deviana Ridhani.

Writing - proofreading and editing: Deviana Ridhani, Dony Novaliendry.